The Solution

ShopAssist: Three-Layer Accessibility System

ShopAssist is a smart lens device: lightweight glasses that integrate an HD camera, millimeter-wave radar, and bone conduction speakers to provide hands-free navigation, obstacle detection, and product identification for independent shopping.

System Architecture

Layer 1: Physical Infrastructure

- Tactile floor markers at store entrance

- Bluetooth beacons on shelves

- High-contrast, large-print aisle signage

- Audio wayfinding at key locations

- Accessible basket/trolley pickup

Layer 2: ShopAssist Smart Lens

- Integrated HD camera for product recognition

- Millimeter-wave radar for obstacle detection

- Bone conduction audio for private voice guidance

- 8-hour battery life, lightweight frame

- Hands-free operation, natural head movements

Layer 3: Staff Support Integration

- Alert system for assistance requests

- Staff dashboard showing user location

- Training modules for staff

- Feedback loop for improvements

- Emergency assistance button

How It Works

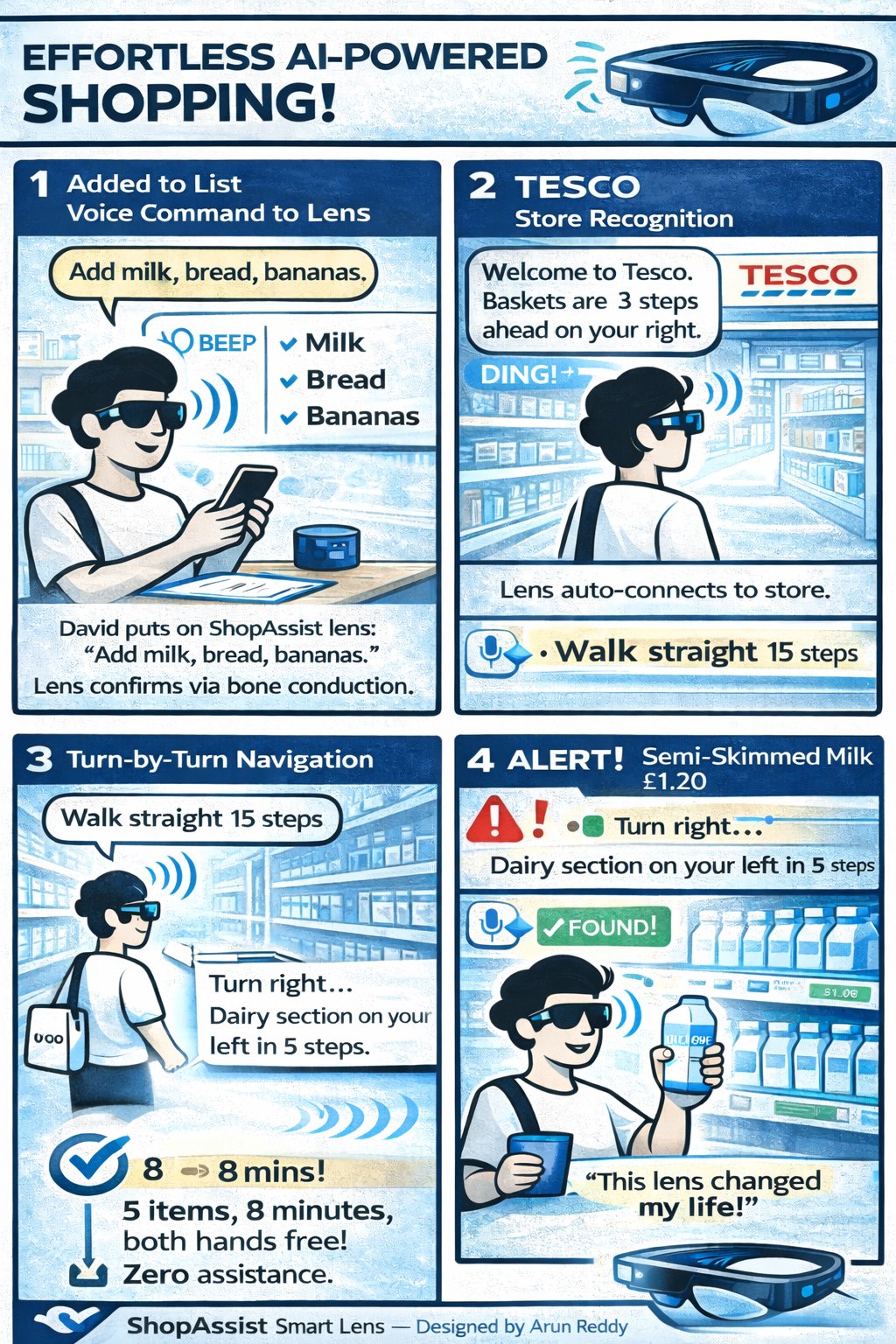

Follow David's shopping journey with ShopAssist in this visual guide.

Key Features

1. Turn-by-Turn Navigation

How it works: The lens combines store map data, Bluetooth beacons, and onboard sensors to provide indoor navigation, delivered hands-free through bone conduction audio.

Voice guidance examples:

- "Walk forward 10 steps"

- "Turn right at the end of the aisle"

- "Dairy section is on your left in 5 steps"

- "You've arrived at the milk shelf"

User benefit: Navigate unfamiliar stores confidently without memorization or assistance.
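
As an illustration only, the sketch below shows one way spoken instructions like those above could be derived from a planned route. The route format (a list of (x, y) waypoints in metres on the store map, with x pointing east and y pointing north), the function names, and the 0.7 m step length are all assumptions made for this example, not part of the ShopAssist design.

```python
import math

STEP_LENGTH_M = 0.7  # assumed average step length, not a ShopAssist specification


def turn_direction(prev, cur, nxt):
    """Return 'left', 'right', or 'straight' for the turn made at waypoint `cur`."""
    ax, ay = cur[0] - prev[0], cur[1] - prev[1]
    bx, by = nxt[0] - cur[0], nxt[1] - cur[1]
    cross = ax * by - ay * bx  # z-component of the 2D cross product
    if abs(cross) < 1e-9:
        # Collinear segments (including reversals) count as straight in this sketch.
        return "straight"
    return "left" if cross > 0 else "right"


def route_to_instructions(waypoints):
    """Convert (x, y) waypoints in metres into a list of spoken instructions."""
    instructions = []
    for i in range(len(waypoints) - 1):
        (x0, y0), (x1, y1) = waypoints[i], waypoints[i + 1]
        steps = max(1, round(math.hypot(x1 - x0, y1 - y0) / STEP_LENGTH_M))
        instructions.append(f"Walk forward {steps} steps")
        if i + 2 < len(waypoints):
            turn = turn_direction(waypoints[i], waypoints[i + 1], waypoints[i + 2])
            if turn != "straight":
                instructions.append(f"Turn {turn}")
    instructions.append("You've arrived")
    return instructions


if __name__ == "__main__":
    # Hypothetical route: 7 m north along an aisle, then 3.5 m east.
    for line in route_to_instructions([(0, 0), (0, 7), (3.5, 7)]):
        print(line)
```

A real system would also need to correct the user's position continuously from beacon and sensor data; this sketch only covers turning a fixed path into phrases.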

2. Real-Time Obstacle Detection

How it works: Integrated millimeter-wave radar and an HD camera with AI vision analyze the environment 60 times per second, detecting obstacles even in low light or at the edge of the user's field of view.

Detects and alerts for:

- Shopping carts in path: "Cart 2 meters ahead, move slightly left"

- People blocking aisle: "Person standing ahead, please wait"

- Wet floor signs: "Caution: wet floor ahead, walk carefully"

- Promotional displays: "Obstacle detected, move right to avoid"

- Store staff restocking: "Staff member kneeling ahead on right"

User benefit: Shop safely without collisions or falls.
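
The alert phrases above could be produced from sensor detections roughly as follows. This is a minimal sketch under stated assumptions: the `Detection` record, its labels, the bearing convention, and the 3 m alert range are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional

ALERT_RANGE_M = 3.0  # assumed distance below which the user is alerted


@dataclass
class Detection:
    kind: str           # hypothetical labels, e.g. "cart", "person", "wet_floor_sign"
    distance_m: float   # range to the obstacle
    bearing_deg: float  # negative = left of the user's heading, positive = right


def alert_for(d: Detection) -> Optional[str]:
    """Return a spoken alert for a detection, or None if it is out of range."""
    if d.distance_m > ALERT_RANGE_M:
        return None
    if d.kind == "person":
        return "Person standing ahead, please wait"
    if d.kind == "wet_floor_sign":
        return "Caution: wet floor ahead, walk carefully"
    # Steer the user away from the side the obstacle is on.
    side = "right" if d.bearing_deg <= 0 else "left"
    label = d.kind.replace("_", " ").capitalize()
    return f"{label} {d.distance_m:g} meters ahead, move slightly {side}"


if __name__ == "__main__":
    print(alert_for(Detection("cart", 2.0, 5.0)))
```

Keeping the phrasing in one function makes it straightforward to review every alert for clarity and consistency with users.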

3. Product Identification

How it works: Simply look at the shelf. The lens camera identifies the products in your field of view and announces their details through bone conduction audio.

Information provided:

- "Tesco Semi-Skimmed Milk, 2 pints, £1.20"

- "Hovis Wholemeal Bread, 800g, £1.05, best before 18th Jan"

- "Heinz Tomato Soup, 400g tin, £0.85, on offer - was £1.10"

User benefit: Choose exactly what you want, compare prices, check expiry dates independently.
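
Once a product has been recognized, its details have to be turned into a short spoken phrase. The sketch below shows one possible way to do that; the `Product` record and its field names are assumptions for illustration, and the date is spoken as "18 Jan" rather than "18th Jan" to keep the example short.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Product:
    # Hypothetical record; the real product data model is not specified here.
    name: str
    size: str
    price_pence: int
    was_price_pence: Optional[int] = None
    best_before: Optional[date] = None


def pounds(pence: int) -> str:
    """Format an amount in pence as pounds, e.g. 85 -> '£0.85'."""
    return f"£{pence // 100}.{pence % 100:02d}"


def announce(p: Product) -> str:
    """Build the phrase read aloud for a recognized product."""
    parts = [p.name, p.size, pounds(p.price_pence)]
    if p.best_before is not None:
        parts.append(f"best before {p.best_before.day} {p.best_before.strftime('%b')}")
    text = ", ".join(parts)
    # Mention the previous price only when the current price is lower.
    if p.was_price_pence is not None and p.was_price_pence > p.price_pence:
        text += f", on offer - was {pounds(p.was_price_pence)}"
    return text


if __name__ == "__main__":
    print(announce(Product("Heinz Tomato Soup", "400g tin", 85, was_price_pence=110)))
```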

4. Barcode Scanner

How it works: Pick up a product and look at it. The lens automatically detects and scans barcodes, reading full product information aloud.

Provides full details:

- Product name and brand

- Price and any offers

- Nutritional information (calories, allergens)

- Ingredients list

- Expiry/best before date

User benefit: Make informed purchasing decisions, check for allergens, avoid expired products.
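
Before looking up product details, a scanner would normally reject misread barcodes. The barcode format is not specified here; the sketch below assumes EAN-13, the 13-digit format common on retail goods in the UK and Europe, and validates its check digit. The function name is illustrative.

```python
def is_valid_ean13(code: str) -> bool:
    """Return True if `code` is 13 ASCII digits with a correct EAN-13 check digit."""
    if len(code) != 13 or not (code.isascii() and code.isdigit()):
        return False
    digits = [int(c) for c in code]
    # The first 12 digits are weighted 1, 3, 1, 3, ... from the left.
    total = sum(d * (3 if i % 2 else 1) for i, d in enumerate(digits[:12]))
    # The check digit brings the weighted sum up to a multiple of 10.
    return (10 - total % 10) % 10 == digits[12]


if __name__ == "__main__":
    print(is_valid_ean13("4006381333931"))
    print(is_valid_ean13("4006381333932"))
```

A failed check would be a signal to rescan rather than to read out details for the wrong product, which matters most for allergen information.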

5. Shopping List Integration

How it works: Create your list by voice command at home. The lens then guides you to each item along an optimized route, hands-free.

Features:

- Voice input: "Add milk and bread to my list"

- Smart routing: Optimizes path to minimize backtracking

- Item tracking: "3 of 5 items found"

- Suggestions: "Butter is on offer today, add to list?"

User benefit: Efficient shopping, never forget items, guided to everything you need.
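
The smart routing idea can be illustrated with a simple nearest-neighbour heuristic: always walk to the closest item not yet collected. This is a sketch, not the routing method ShopAssist uses. The heuristic reduces backtracking but does not guarantee the shortest route, and the item coordinates and function name are hypothetical.

```python
def plan_route(start, item_locations):
    """Order items by repeatedly visiting the nearest remaining one.

    `start` is an (x, y) position and `item_locations` maps item names to
    (x, y) positions, all in metres on an assumed store map. Distance is
    measured as Manhattan distance, a rough fit for walking along aisles.
    """
    remaining = dict(item_locations)
    position, order = start, []
    while remaining:
        nearest = min(
            remaining,
            key=lambda name: abs(remaining[name][0] - position[0])
            + abs(remaining[name][1] - position[1]),
        )
        order.append(nearest)
        position = remaining.pop(nearest)
    return order


if __name__ == "__main__":
    items = {"milk": (10, 2), "bread": (2, 1), "soup": (5, 1)}
    route = plan_route((0, 0), items)
    for found, name in enumerate(route, start=1):
        print(f"{name}: {found} of {len(route)} items found")
```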

6. Accessible Checkout Guidance

How it works: The lens identifies the checkout area, counts the people in each queue, and guides you to the shortest one with real-time position updates.

Guidance provided:

- "Checkout area ahead. 3 tills open."

- "Shortest queue: till 2, walk straight 8 steps"

- "2 people ahead of you in queue"

- "Next customer, please move forward to till"

- For accessible tills: "Accessible till 5 available with no queue"

User benefit: Find checkout quickly, know when it's your turn, choose accessible options.
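
Choosing a till from the observed queues could look like the sketch below. The `Till` record and the tie-breaking rule (prefer an accessible till when queues are equal, then the lower till number) are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Till:
    # Hypothetical record of what the lens has observed at one till.
    number: int
    is_open: bool
    queue_length: int
    accessible: bool = False


def checkout_guidance(tills):
    """Return spoken messages guiding the user to the shortest open queue."""
    open_tills = [t for t in tills if t.is_open]
    if not open_tills:
        return ["No tills open"]
    count = len(open_tills)
    messages = [f"Checkout area ahead. {count} till{'s' if count != 1 else ''} open."]
    # Shortest queue first; ties go to accessible tills, then the lower number.
    best = min(open_tills, key=lambda t: (t.queue_length, not t.accessible, t.number))
    if best.accessible and best.queue_length == 0:
        messages.append(f"Accessible till {best.number} available with no queue")
    else:
        noun = "person" if best.queue_length == 1 else "people"
        messages.append(f"Shortest queue: till {best.number}")
        messages.append(f"{best.queue_length} {noun} ahead of you in queue")
    return messages


if __name__ == "__main__":
    tills = [Till(1, True, 4), Till(2, True, 2), Till(3, False, 0), Till(5, True, 3, accessible=True)]
    for message in checkout_guidance(tills):
        print(message)
```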